OPPO takes home a remarkable 12 awards at CVPR 2021

From computational intelligence to human-centric intelligence, OPPO is exploring more advanced AI technology that can better understand and assist people

24 June 2021, SINGAPORE – Leading global smartphone brand OPPO has been recognised with a total of 12 awards at the recent Computer Vision and Pattern Recognition Conference (CVPR) 2021, demonstrating the company's industry-leading technological strengths and innovative breakthroughs in AI. OPPO's AI work was recognised in 12 different contests across seven major challenges, in which OPPO gained one first-place, seven second-place, and four third-place awards.

The team that competed at CVPR 2021 on behalf of OPPO came from the Intelligent Perception and Interaction Department and the OPPO US Research Centre of OPPO Research Institute. Its entries in six major challenges (Multi-Agent Behaviour, SoccerNet, LOVEU, AVA-Kinetics, MMact, and People in Context) all achieved excellent results. By optimising and training AI algorithms, the team continues to strengthen OPPO's AI capabilities and help its AI technology better serve people.

Eric Guo, Chief Scientist, Intelligent Perception, OPPO, said, "We are very pleased to have achieved such remarkable results again in this year's CVPR Challenges, following our inaugural participation in CVPR 2020. Last year, we won first place in the Perceptual Extreme Super-Resolution Challenge, with technology that can sharpen blurry images, and in the Visual Localization for Handheld Devices Challenge, with technology that makes fusion positioning more precise. The Challenges won by OPPO this year, such as Multi-Agent Behavior, AVA-Kinetics, and 3D Face Reconstruction from Multiple 2D Images, cover more complex and advanced areas of computer vision, including behaviour detection, localisation of human actions in space and time, and facial detection."

"These technologies can be used in a whole range of scenarios such as manufacturing, home, office, photography, health, and mobility," added Guo. "At OPPO, we are committed to making AI better serve people, providing users with more intelligent and convenient experiences."

Among its 12 honours, OPPO received three awards in the Multi-Agent Behavior Challenge, which assesses an AI model's ability to understand, define, and predict complex interactions between intelligent agents such as animals and human beings. OPPO won first place in the Learning New Behaviour category, second place in Classical Classification, and third place in Annotation Style Transfer, distinguishing itself from more than 240 other participants. The same technology already plays an essential role in OPPO's factory, where the algorithms help workers reduce operational mistakes, particularly in key production steps, to protect their own safety as well as the quality of products coming off the production line.

From computational intelligence to human-centric intelligence, OPPO improves AI's ability to understand people

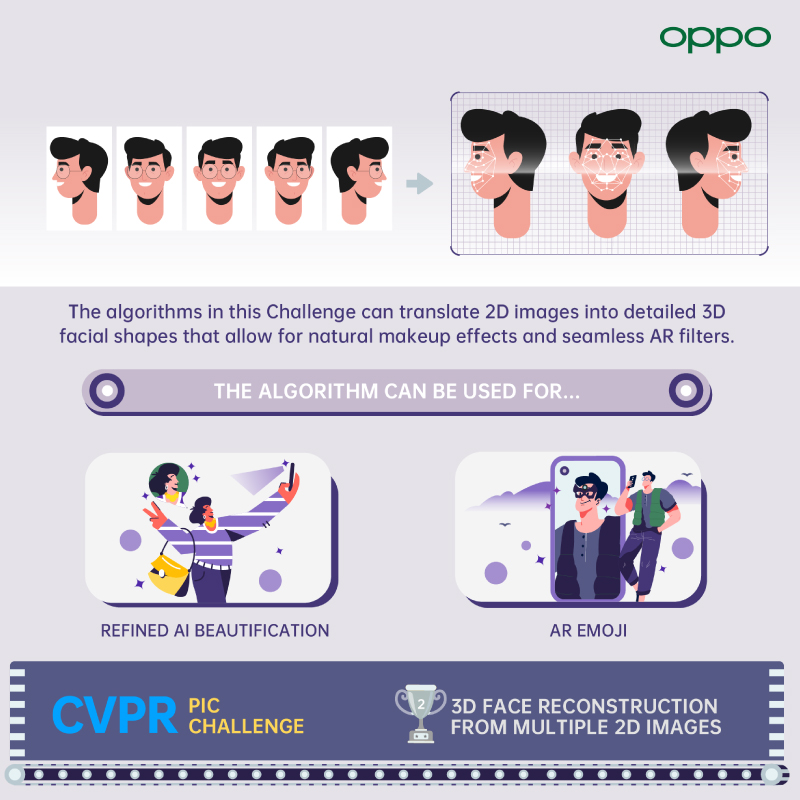

Through its mission of "Technology for Mankind, Kindness for the World," OPPO is building capabilities in human-centric AI. In the 3D Face Reconstruction from Multiple 2D Images Challenge, OPPO's self-developed AI algorithm was able to reconstruct 3D facial shapes with an error of around 1mm, leading it to achieve second place in the main index score ranking. OPPO's technology overcomes problems associated with unclear facial features, exaggerated expressions, and even damaged image data caused by real-life movements, especially in dynamic videos, to produce more accurate 3D facial models.

OPPO's self-developed facial detection algorithm can identify 635 key feature points at a rate of 30 times per second. The same algorithm architecture powers the AI makeup video feature on the upcoming OPPO Reno6 smartphone, which lets users easily create dynamic and natural beauty effects in their videos. This technology will advance portrait video technology, with 3D feature recognition making makeup effects and filters appear more lifelike and personalised. It will also enable richer and more seamless AR filters on social platforms, letting users experience cutting-edge technology in everyday moments.

3D Face Reconstruction from Multiple 2D Images

AI that understands space and time

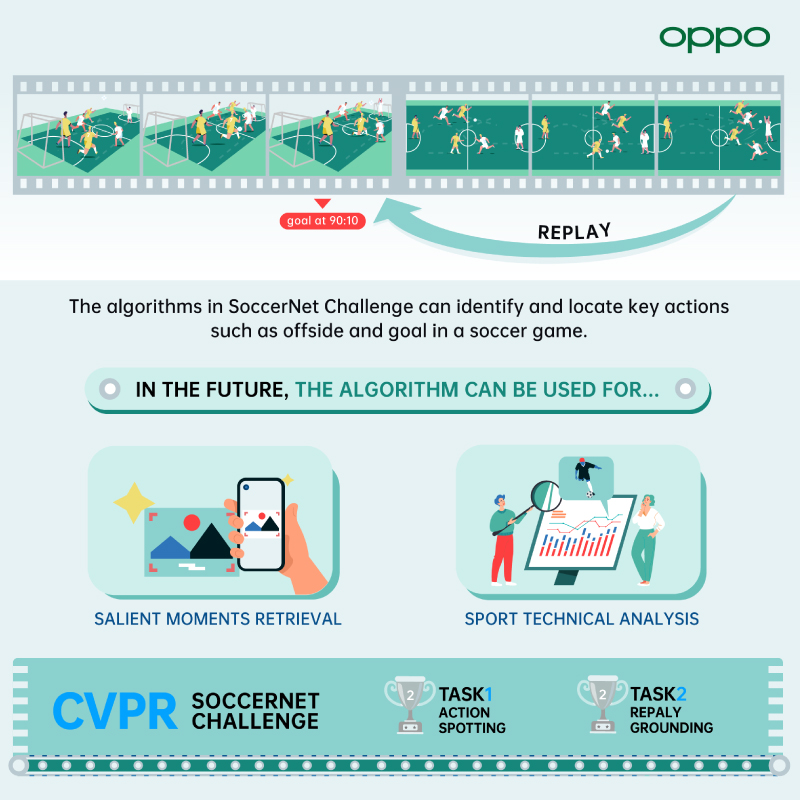

OPPO's AI capabilities have already developed to the stage where they can recognise human actions in space and time. In the SoccerNet Challenge, OPPO took second place in both the Action Spotting and Replay Grounding tasks. The challenge evaluated the ability of algorithms to identify more than a dozen key actions in a video of a soccer game, including offside and red card violations, which are often difficult even for humans to recognise because of the complexity of the rules and the subtlety of how they are interpreted. To be effective, the AI algorithm also needs to account for variables such as different camera angles, and to accurately retrieve the timestamp in the original game of the action shown in a given replay shot. The future applications of this technology are wide-reaching, and it will help improve the experience for sports lovers through features such as automatically generated match highlights. In a similar fashion, the technology can automatically create highlights of a user's life, such as weekly highlight clips, by analysing the videos on their smartphone.

SoccerNet Challenge

In the MMact Challenge, OPPO took second place in both the Cross-Modal Action Recognition and Cross-Modal Action Temporal Localization tasks. OPPO's AI algorithm can accurately recognise more than ten types of actions in a video, such as talking, crouching, and walking, using only visual data. This technology is expected to be widely adopted in smart homes in the future, helping to better care for children, pets, the elderly, and other vulnerable people at home. For example, the AI can alert parents in another room as soon as a baby or child does something potentially dangerous.

OPPO also won third place in the AVA-Kinetics Challenge, which makes use of the industry's first dataset to include both spatial and temporal information. The Challenge's Positioning competition has long been one of the most popular competitions in the field of artificial intelligence, attracting competitors from top international technology companies and universities. OPPO's algorithm for this challenge can not only accurately identify people's various behaviours in a video but also record when and where each occurs. As a result, OPPO's AI technology understands not only what you are doing but also where and when you are doing it.

OPPO continues to explore the frontiers of AI technology

At this year's CVPR, OPPO also reached a new milestone in more cutting-edge academic challenges by securing two third-place awards in the LOVEU (Long-form Video Understanding) challenge. The LOVEU challenge requires AI technology to understand the content of a video and segment it into chunks without being given pre-defined categories. Given the huge variety of possible content, the challenge is a significant test of how well AI algorithms generalise: the AI needs to think like a human being, understand colours, objects, human actions, and even lighting in the video, and judge how these change over time. In the future, this technology could serve as the foundation for further AI tasks in video processing, such as facial detection and behaviour recognition.

OPPO US Research Centre also participated in the Dense Depth for Autonomous Driving Challenge, demonstrating technology that can output dense 3D depth information from a single 2D image. OPPO won second place in the Self-supervised track and took home the "Novelty Award." This technology uses deep learning models to output depth information directly from regular images, and it has the potential to replace depth sensors such as time-of-flight (ToF) sensors in the future, bringing better indoor and outdoor navigation experiences.